
Overview

Trae is an AI-native IDE launched by ByteDance in January 2025, positioned as a “Vibe Coding” productivity tool for professional developers — describe what you want in natural language and the AI handles completion, bug fixing, project scaffolding, and one-click preview. Trae ships in two flavors: TRAE CN (trae.cn) and the international TRAE (trae.ai), with the SOLO series (Desktop / App / Web) letting the agent take over the full task lifecycle.

By wiring APIYI in through Trae’s “Custom Model” feature, you get:

🔌 Dual-Protocol Coverage

🤖 400+ Models

💰 5% Off on Claude

🛡️ Stable Direct Connect

api.apiyi.com is directly reachable from mainland China, so no extra proxy is needed.

- 🔗 International: www.trae.ai

- 🔗 China edition: www.trae.cn

- 👥 Developer: ByteDance

- 📅 First released: January 2025

- 🧩 Modes: Builder (agent) / Chat (sidebar) / Inline Chat

- 🌐 Protocols supported: OpenAI, Anthropic, plus many other third-party providers

Core Features

Three Interaction Modes

- Builder mode: agent takes over — reads/writes files, runs commands, scaffolds projects

- Chat mode: sidebar conversation, similar to Cursor Chat / Cline, great for Q&A and snippets

- Inline Chat: Cmd/Ctrl + I opens an in-editor inline conversation, the fastest path for completion and refactoring

MCP and Tooling Ecosystem

- Built-in MCP (Model Context Protocol) support for external tools and APIs

- Remote-SSH support — remote dev feels identical to local

- .rules files for project-level AI behavior

Custom Model (focus of this guide)

The international Trae ships presets for Anthropic, OpenAI, Gemini, xAI, OpenRouter, Ollama, DeepSeek, Volcano Engine, Aliyun, Tencent Cloud, SiliconFlow, PPIO, Novita, BytePlus and more. Every preset lets you fill in a custom model ID, an API key, and a custom request URL. That is the entry point this guide uses to plug APIYI in.

Quick Start

Step 1: Install Trae

- International (TRAE): www.trae.ai, supports macOS, Windows, and Linux. The international build ships GPT / Claude / Gemini presets out of the box.

- China edition (TRAE CN): www.trae.cn

Step 2: Get an APIYI Token

- Visit the APIYI token console: api.apiyi.com/token

- Click “New Token”

- For Claude-heavy usage: pick the ClaudeCode group. Claude calls get 5% off, stackable with the 10%-20% top-up bonus

- For mixed GPT/Gemini/DeepSeek usage: the Default group is fine

- Copy the sk- prefixed key
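The discount figures in Step 2 can be turned into rough arithmetic. The sketch below assumes the top-up bonus is credited as extra balance on top of the amount paid and that the ClaudeCode discount lowers what each call deducts; the source does not spell out the exact mechanics, so treat the function name and the stacking formula as illustrative only.

```python
def effective_cost_per_dollar(discount: float, topup_bonus: float) -> float:
    """Cash paid per $1 of list-price usage.

    Assumption (not confirmed by the source): the top-up bonus is credited
    as extra balance, and the group discount reduces each call's deduction.
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    if topup_bonus < 0:
        raise ValueError("topup_bonus must be non-negative")
    return (1 - discount) / (1 + topup_bonus)


# 5% ClaudeCode discount combined with the low and high end of the bonus range.
for bonus in (0.10, 0.20):
    rate = effective_cost_per_dollar(discount=0.05, topup_bonus=bonus)
    print(f"bonus {bonus:.0%}: about ${rate:.3f} paid per $1 of list-price usage")
```

Under these assumptions the two benefits multiply rather than add, so the combined saving is somewhat smaller than the sum of the two percentages.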

Step 3: Open the Custom Model Panel in Trae

- IDE mode: click the ⚙️ icon in the top-right → Models in the left nav → “Add model” / “Custom model”

- SOLO mode: click ⚙️ in the top-right of the chat panel → Models → Add

Step 4: Add the OpenAI-Protocol Entry (GPT / Gemini / DeepSeek / Doubao, etc.)

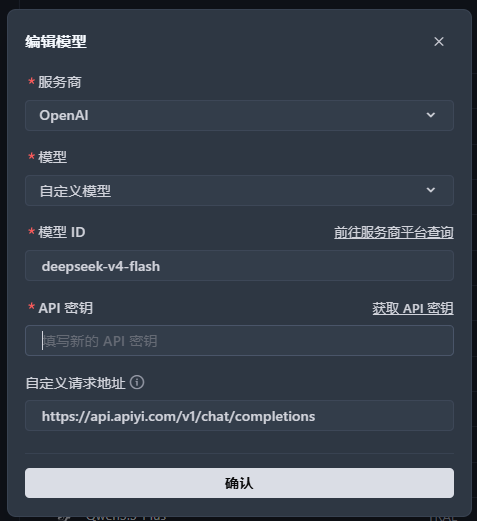

Fill in the fields as shown below. The custom request URL must include the full /v1/chat/completions path, not just the domain:

| Field | Value | Notes |
|---|---|---|
| Provider | OpenAI | Pick the OpenAI preset |
| Model | Custom Model | Last entry in the dropdown |
| Model ID | e.g. gpt-5.1, deepseek-v4-flash, gemini-3-pro-preview | Full ID of the model you want |
| API Key | sk-... | Paste the APIYI token from Step 2 |
| Custom Request URL | https://api.apiyi.com/v1/chat/completions | Must include /v1/chat/completions |
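Before pasting these values into Trae, it can help to confirm the token and URL work outside the IDE. The following is a minimal sketch using only the Python standard library. It assumes the endpoint accepts a standard OpenAI-style chat request with a Bearer token; the helper names and the APIYI_API_KEY environment variable are hypothetical.

```python
import json
import os
import urllib.request

# URL taken from the table above.
APIYI_CHAT_URL = "https://api.apiyi.com/v1/chat/completions"


def build_chat_request(api_key: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        APIYI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON body; raises HTTPError on 4xx/5xx."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def main() -> None:
    # APIYI_API_KEY is a hypothetical variable name holding the sk- token.
    request = build_chat_request(os.environ["APIYI_API_KEY"], "gpt-5.1", "Reply with OK.")
    reply = send(request)
    print(reply["choices"][0]["message"]["content"])
```

Calling main() with the token exported in the environment should print a short reply if the key, model ID, and URL are all valid; an HTTP error here points at the account or URL rather than at Trae.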

Step 5: Add the Anthropic-Protocol Entry (Claude family)

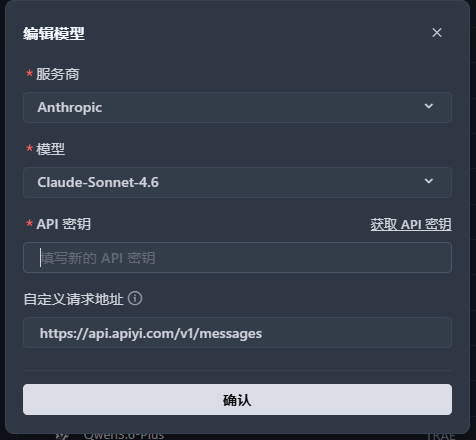

If you also want Claude Opus 4.6 / Sonnet 4.6 / Haiku 4.5, add a second provider entry:

| Field | Value | Notes |
|---|---|---|
| Provider | Anthropic | Pick the Anthropic preset |
| Model | Claude-Sonnet-4.6 (or another Claude version in the dropdown) | Use the official preset; no need to go to “Custom model” |
| API Key | sk-... | Paste the APIYI token (ideally one from the ClaudeCode group) |
| Custom Request URL | https://api.apiyi.com/v1/messages | Must include /v1/messages (note: this is NOT /v1/chat/completions) |
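The Anthropic-protocol entry can be checked the same way outside the IDE. This sketch assumes the /v1/messages endpoint accepts the standard Anthropic headers (x-api-key and anthropic-version); whether APIYI also accepts a Bearer token here is not stated in this guide. The helper names are hypothetical, and the model ID is one of the examples used elsewhere on this page.

```python
import json
import urllib.request

# URL taken from the table above.
APIYI_MESSAGES_URL = "https://api.apiyi.com/v1/messages"


def build_messages_request(api_key: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an Anthropic Messages API request."""
    payload = {
        "model": model_id,
        "max_tokens": 64,  # required by the Messages API
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        APIYI_MESSAGES_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Assumption: the gateway honors the official Anthropic headers.
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def first_text(reply: dict) -> str:
    """Return the first text block of a Messages API reply, or '' if none."""
    for block in reply.get("content", []):
        if block.get("type") == "text":
            return block.get("text", "")
    return ""
```

The request body differs from the OpenAI shape mainly in the required max_tokens field and in the reply, which carries a list of content blocks instead of a choices array. That difference is why the two protocols need separate provider entries.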

The OpenAI protocol goes through /v1/chat/completions; the Anthropic protocol goes through /v1/messages. APIYI hosts both endpoints, so the same token can be bound to both Trae provider entries simultaneously without conflict.

Step 6: Switch Models and Start Coding

Back in the editor, click the model dropdown at the top. Both providers and all of their models will appear. Pick one and start chatting or enter Builder mode.

Recommended Model Lineups

Daily Coding (best value)

Complex Architecture (flagship)

Deep Reasoning

Cost-Optimized (CN models)

See the full model list and coding recommendations

Pro Tips

Keep both provider entries

Can't find the latest model in the dropdown?

Prefer Claude for Builder mode

Add the -thinking suffix for hard tasks

Append -thinking to the model ID (e.g. claude-sonnet-4-6-thinking) to force chain-of-thought. This markedly reduces hallucinations on architecture decisions and security audits in Builder mode.

FAQ

TRAE CN vs international TRAE — any difference for APIYI integration?

Pick TRAE CN (trae.cn) if you’re in mainland China; pick international TRAE (trae.ai) for global teams or when you need the overseas preset models.

Why does the baseURL need to go all the way to /v1/chat/completions?

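The custom request URL must be the full endpoint path rather than a base URL that stops before /chat/completions.

Correct:

- OpenAI protocol: https://api.apiyi.com/v1/chat/completions

- Anthropic protocol: https://api.apiyi.com/v1/messages

Incorrect:

- ❌ https://api.apiyi.com

- ❌ https://api.apiyi.com/v1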
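The URL rule above can be expressed as a small check. This is a minimal sketch, assuming an exact path match is what Trae needs; the function name and the provider keys are hypothetical.

```python
from urllib.parse import urlsplit

# Expected endpoint path per provider entry, as listed in this guide.
EXPECTED_PATHS = {
    "openai": "/v1/chat/completions",
    "anthropic": "/v1/messages",
}


def is_valid_request_url(provider: str, url: str) -> bool:
    """Return True if the URL is https and ends in the provider's full path."""
    parts = urlsplit(url.strip())
    return (
        parts.scheme == "https"
        and bool(parts.netloc)
        and parts.path == EXPECTED_PATHS[provider]
    )


for candidate in (
    "https://api.apiyi.com/v1/chat/completions",
    "https://api.apiyi.com/v1",
    "https://api.apiyi.com",
):
    print(candidate, is_valid_request_url("openai", candidate))
```

The check is deliberately strict about the path so that a bare domain or a /v1 base URL is flagged before it reaches the IDE.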

Can I use 'Custom Model' under the Anthropic provider to type any model ID?

Yes. You can enter IDs such as claude-opus-4-6 / claude-sonnet-4-6-thinking / claude-haiku-4-5-20251001 directly. APIYI’s /v1/messages endpoint is fully compatible with official model IDs.

How do I get the 5% Claude discount?

When creating a token at api.apiyi.com/token, pick the ClaudeCode group: Claude calls automatically get 5% off, stackable with the 10%-20% top-up bonus. Paste this ClaudeCode-group token into the Anthropic provider entry in Trae and the discount applies automatically.

Why don't I see GPT-5.1 / Claude 4.6 / latest models in Trae?

Builder mode keeps hanging / tool calls fail


- Prefer Claude Sonnet 4.6 or Opus 4.6: they’re notably more reliable in tool-call workflows

- Avoid small non-reasoning models: DeepSeek-Chat / smaller Qwen variants can loop in Builder mode; switch to the thinking variant

- Watch context length: for large multi-file changes switch to Opus 4.6 (200K context)

- Check APIYI live status: occasional upstream wobbles affect all clients — confirm it’s not a channel issue
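One way to separate a model problem from a Trae problem is to probe tool calling directly over the OpenAI protocol. The sketch below builds a standard OpenAI-style payload with a single function tool and checks whether a reply contains a tool call. The read_file tool and both helper names are hypothetical, and this does not reproduce what Builder mode actually sends.

```python
def build_tool_probe(model_id: str) -> dict:
    """Chat-completions payload that invites the model to call one tool."""
    return {
        "model": model_id,
        "messages": [
            {"role": "user", "content": "Read the file README.md using the tool."}
        ],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "read_file",  # hypothetical tool for the probe
                    "description": "Read a text file from the workspace.",
                    "parameters": {
                        "type": "object",
                        "properties": {"path": {"type": "string"}},
                        "required": ["path"],
                    },
                },
            }
        ],
    }


def has_tool_call(reply: dict) -> bool:
    """True if the first choice of a chat-completions reply requests a tool."""
    choices = reply.get("choices") or []
    if not choices:
        return False
    message = choices[0].get("message") or {}
    return bool(message.get("tool_calls"))
```

Posting the payload to the /v1/chat/completions URL with your token and passing the decoded reply to has_tool_call gives a quick signal: a model that answers in plain text instead of requesting read_file is a likely candidate for stalling in agent workflows.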

What about Trae's telemetry / data upload?


- Allow-listing at the corporate egress

- Redacting sensitive snippets before entering Builder mode

- Choosing open-source / auditable clients like Claude Code or Cline as an alternative

Trae vs Cursor / Cline / Claude Code — how do I pick?


| Tool | Type | Agent mode | APIYI integration | Best for |
|---|---|---|---|---|
| Trae | Standalone IDE | ✅ Builder | Medium (two entries for dual protocol) | Cursor-style UX with strong Chinese support |
| Cursor | Standalone IDE | ✅ Agent | Easy (OpenAI protocol only) | Best-in-class completion and diff preview |
| Cline | VS Code plugin | ✅ | Easy | Already a heavy VS Code user |
| Claude Code | CLI | ✅ | Easy | Terminal workflow, CI, remote dev |

How to debug 401 / 403 errors?


- Verify the API key starts with sk- and has no extra whitespace

- Verify the baseURL is correct, especially the trailing path (/v1/chat/completions vs /v1/messages)

- In the APIYI console, check the token is enabled and the target model is bound to the token’s group

- Insufficient balance can also surface as 401 — double-check the account balance
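The first two checks in this list are mechanical and can be scripted before opening the console. The sketch below is illustrative only: the function name and messages are hypothetical, and it cannot detect a disabled token, an unbound model, or a low balance.

```python
from typing import List

# Endpoint path each Trae provider entry should end with, per this guide.
EXPECTED_SUFFIX = {
    "openai": "/v1/chat/completions",
    "anthropic": "/v1/messages",
}


def preflight(api_key: str, request_url: str, provider: str) -> List[str]:
    """Return human-readable problems found in a provider entry."""
    problems = []
    if api_key != api_key.strip():
        problems.append("API key has leading or trailing whitespace")
    if not api_key.strip().startswith("sk-"):
        problems.append("API key does not start with sk-")
    expected = EXPECTED_SUFFIX[provider]
    if not request_url.strip().endswith(expected):
        problems.append(f"{provider} entry should end with {expected}")
    return problems


# A key pasted with a trailing newline, bound to the wrong protocol path.
for issue in preflight("sk-example\n", "https://api.apiyi.com/v1/chat/completions", "anthropic"):
    print("-", issue)
```

An empty list means the key format and URL path look right, so the remaining suspects are the token's group binding and the account balance.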

Related Resources

Model Recommendations

APIYI Console

Cursor Integration

Cline Plugin

For technical support, visit api.apiyi.com or join the official community.